How to name your AI agents (and why the ones with names get all the work)

Claude, Devin, Jules, Goose, Harvey: the AI agents people actually call by name all shipped with a name, not a version string. A short, warm read on why names matter, a lineup of real named agents in production, and three rules we now use when we ship a new one.


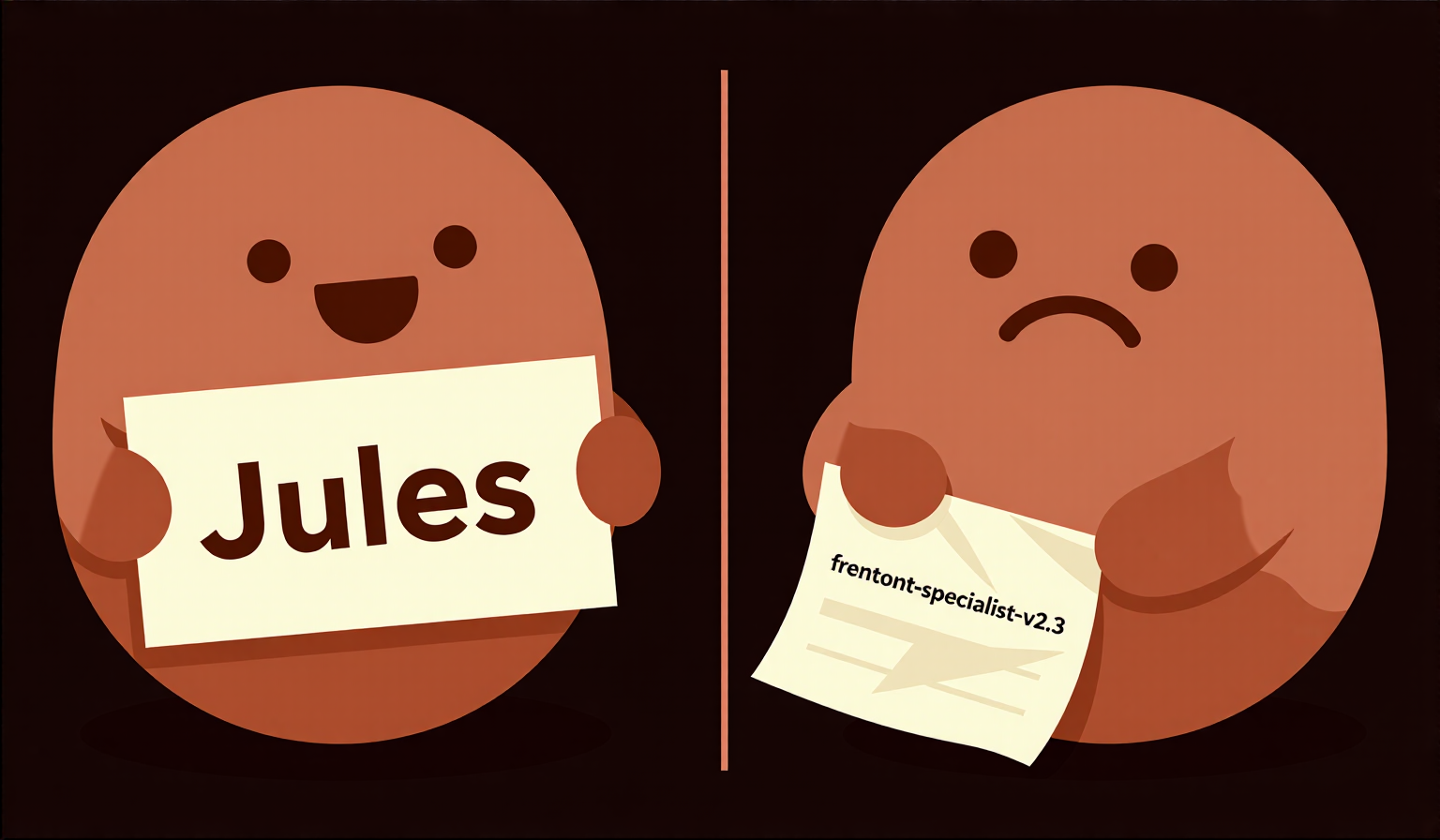

Three agents walk into a chat. Two have names. The third is called frontend-specialist-v2.3. Guess which one is still waiting to be useful.

Every AI team eventually runs into this. You spin up an impressive roster of specialists, and a few of them get called all the time while the rest sit in the dropdown like forgotten Slack channels. The difference, nine times out of ten, isn’t capability. It’s names.

Why the named AI agents win

Anthropic didn’t call their model assistant-v3. They called it Claude, a name widely reported as a nod to Claude Shannon, the father of information theory. That choice wasn’t branding fluff. A name gives people somewhere to put their expectations. It’s a handle.

Cognition did the same when they announced Devin in March 2024 and billed it as the "first AI software engineer". Whether or not you buy the claim, the name did the marketing for them. You remember Devin. You do not remember the number at the end of a config file.

Humans delegate to characters, not to slugs. We always have. A name is the cheapest possible mental model.

A short lineup of AI agents people actually call by name

Not a ranking, just a cast list. Every one of these went out the door with a real name attached, and every one of them gets talked about in meetings because of it.

Claude — Anthropic, 2023. Reportedly named after Claude Shannon. A name that sounds like someone you’d ask for advice.

Devin — Cognition Labs, March 2024. Pitched as an autonomous software engineer; went viral on launch day.

Jules — Google Labs, GA in August 2025. An async coding agent that lives in your GitHub repo. Short name, one job.

Goose — Block’s open-source agent, Apache 2.0. The name sounds like a workhorse, and that’s what it is.

Harvey — Counsel AI, used at more than half of the AmLaw 100. You hear the name and picture a lawyer. That’s the whole point.

Aider — open-source terminal pair programmer. Not a person’s name, but it reads like a role, not a version string. That’s enough.

Three rules for agent names that stick

1. One word, speakable. Claude, Devin, Jules, Goose, Harvey. You can say "hey Jules, open a PR" in Slack without feeling like you’re reading a build log.

2. Suggest the role, not the tech. Harvey feels like a lawyer before you’ve read a single feature. Goose feels like something you put to work. frontend-specialist-v2.3 feels like a Jira ticket.

3. No version numbers. Ever. Nobody has ever said "let Claude-v3-turbo-preview handle it." When a prompt evolves, let the character evolve with it — or retire the agent and bring in a new one with a new name. Teammates, not SKUs.
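If you want a guardrail for the day someone registers a new agent, rules 1 and 3 are mechanical enough to check automatically. The sketch below is hypothetical: `naming_problems` is a helper we made up for illustration, not part of any agent framework, and it approximates "speakable" as letters-only and "version number" as any digit. Rule 2 stays a human call.

```python
import re


def naming_problems(name: str) -> list[str]:
    """Return the naming rules a proposed agent name breaks (empty list = passes).

    Hypothetical helper. Only rules 1 and 3 are checked; rule 2
    (suggest the role, not the tech) is a judgment call this sketch skips.
    """
    problems = []
    # Rule 1: one word, speakable. Approximated as "letters only", so
    # spaces, hyphens, dots and underscores all fail the check.
    if not name.isalpha():
        problems.append("rule 1: not a single speakable word")
    # Rule 3: no version numbers. Approximated as "contains no digits".
    if re.search(r"\d", name):
        problems.append("rule 3: contains a version number")
    return problems


if __name__ == "__main__":
    for candidate in ["Jules", "Goose", "Frontend Developer", "frontend-specialist-v2.3"]:
        print(f"{candidate!r}: {naming_problems(candidate) or 'ok'}")
```

A check like this won’t make a name good. It just keeps frontend-specialist-v2.3 out of the dropdown.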

The mirror moment

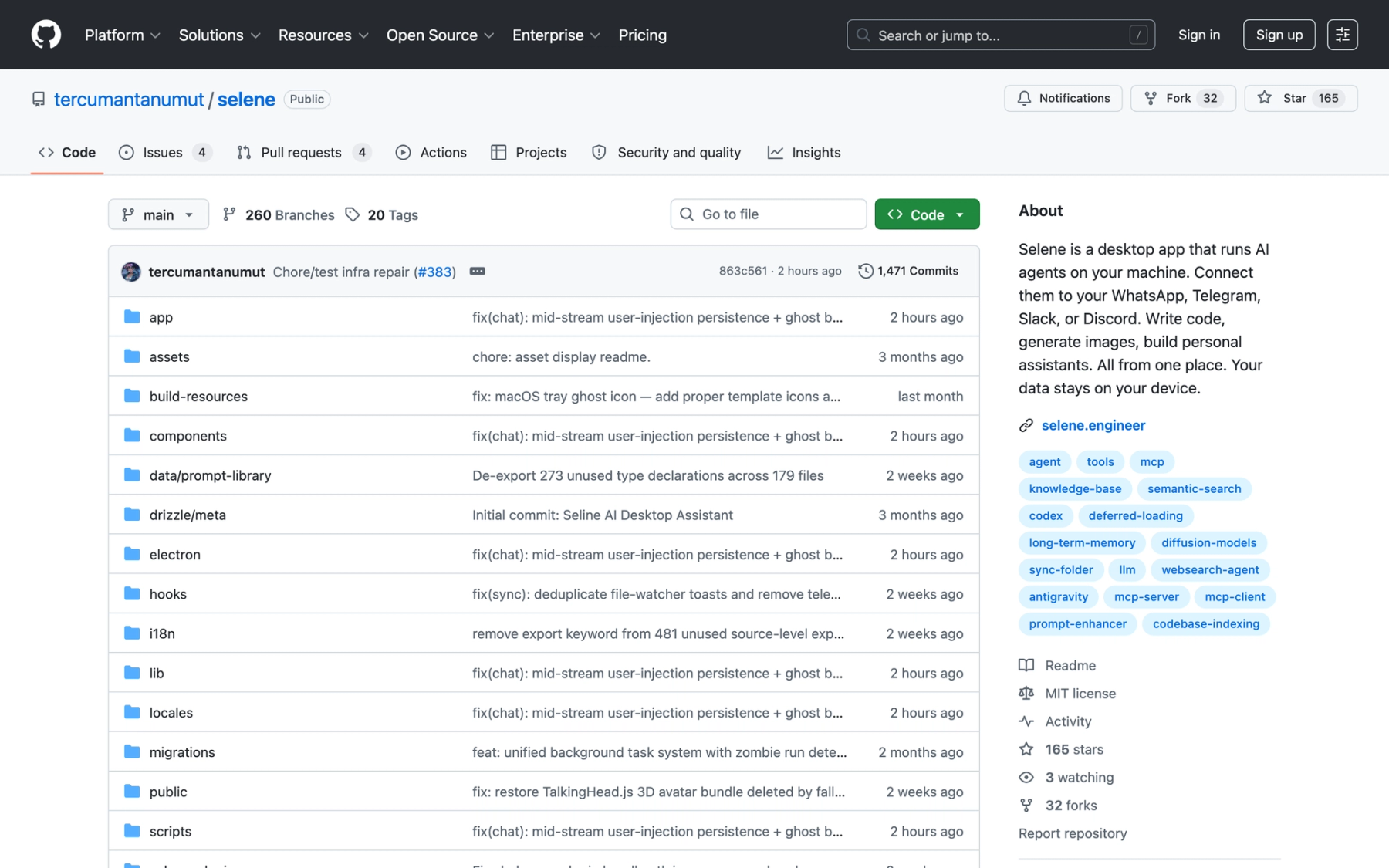

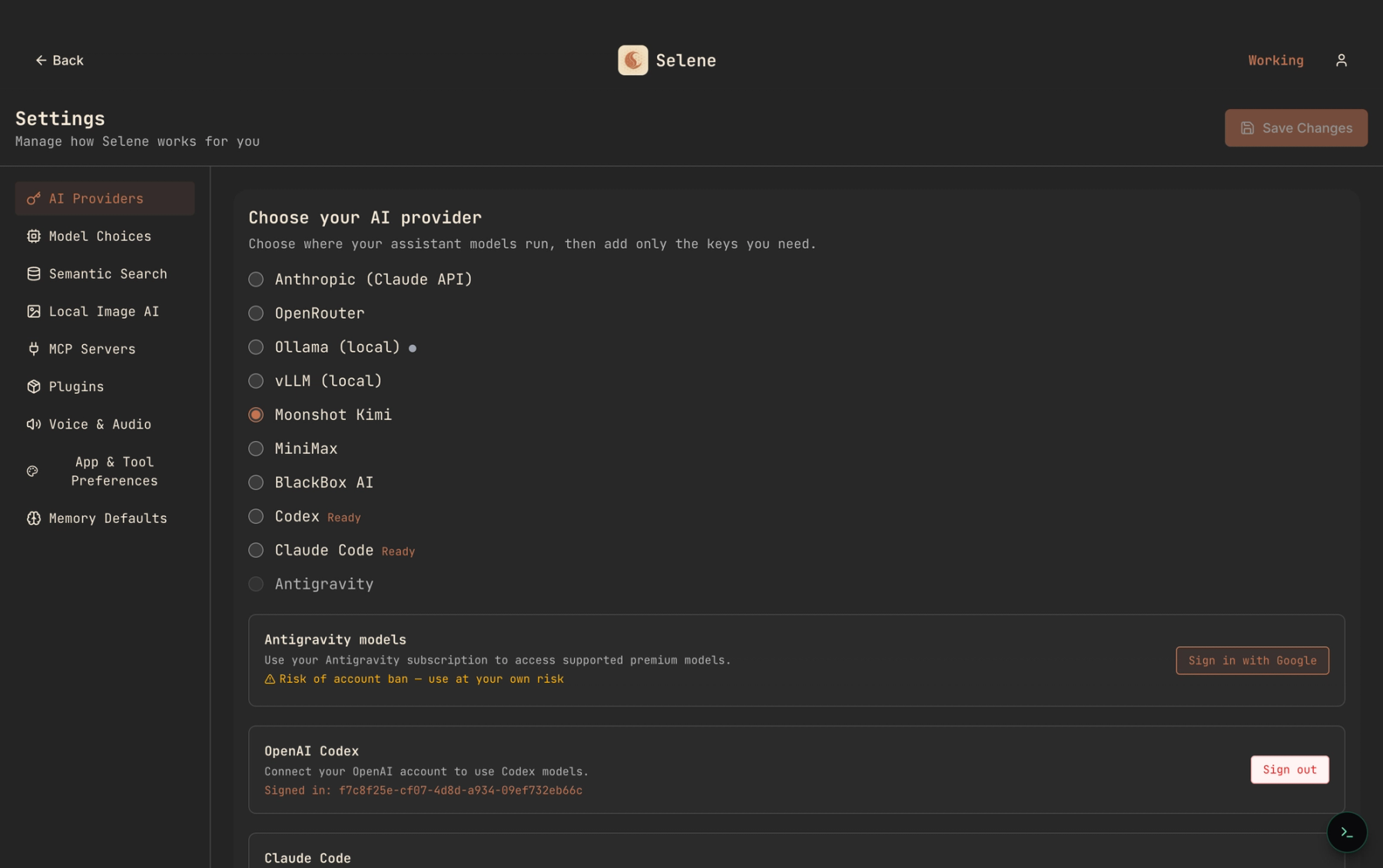

We wrote this post and then looked at our own workflow. In Selene’s System Specialists, our agents are called Explore, Plan, Frontend Developer, Backend Architect. One of those passes the rules. Three of them don’t.

Naming is a live project for us too. That’s kind of the joke and kind of the point — the right names almost always come after the agent has been doing the work for a while.

The party-game test

Here’s the vibe check. Picture your agents playing one of those role-guessing games — vampire, villager, the quiet one who always turns out to be the werewolf. Can you tell who’s who from their names alone?

If yes, your team has character. If no, you have a spreadsheet.

Naming agents feels silly at first. It’s a little like naming your Roombas. Do it anyway.

The ones you name get work. The ones you don’t sit in the dropdown forever. Give them faces. You’ll ship more — and the humans on your team will stop dreading the handoff.