How to predict your AI coding costs in 2026: a practical model for teams

Most AI coding bills look unpredictable because the pricing is built out of synthetic credits on top of real tokens. Here is a four-variable cost model, verified Anthropic and OpenAI rate cards, and an honest monthly bill for a five-developer team — $368 uncached or roughly $150 with prompt caching, not $3,000.

Every engineering manager asks a version of the same question at the start of the month: what is my team going to spend on AI coding tools this month? And every manager discovers, by the 18th, that the answer was wrong.

Predictable AI coding pricing isn’t about being cheap. It’s about being boring — the same way your AWS bill is boring, the same way payroll is boring. A budget line you can defend to finance without a spreadsheet full of footnotes.

This post is a practical cost model for 2026. Real per-million-token prices from current Anthropic and OpenAI docs, four variables any team can plug in, and an honest monthly bill for a five-developer team.

Why predictable AI coding pricing is so hard in 2026

Three things changed in the last eighteen months that made forecasting a mess.

First, the unit of work moved. A "request" used to mean a single chat turn. Now a single request can fan out into an agentic loop with tool calls, sub-agents, and multi-file edits — each burning tokens you didn’t plan for.

Second, the model tiers multiplied. Anthropic alone now lists Opus 4.7, Opus 4.6, Opus 4.5, Sonnet 4.6, Sonnet 4.5, and Haiku 4.5 as current pricing tiers on the official pricing page. OpenAI has the GPT-5 family spread across GPT-5, 5.1, 5.2 and 5.4. Each tier is a different dollars-per-token reality.

Third, the bundling layer lies to you. Credits, "messages", "premium requests", "fast requests" — these are all synthetic units a middleware vendor picks to make the bill feel stable. The underlying tokens aren’t stable, they’re just hidden.

Credits, tokens, and dollars are not the same thing

Most AI coding billing is a three-layer sandwich, and almost everybody gets confused at the middle layer.

Layer one is dollars you pay the vendor. Either a flat monthly seat, or a topped-up credit balance, or a direct API invoice.

Layer two is the vendor’s synthetic unit. Credits, points, premium messages. This layer exists to make the bill feel predictable even when the underlying cost isn’t.

Layer three is tokens actually sent to a model. This is the only layer that’s real. Every dollar you spend, somewhere, lands as a number of input and output tokens at a provider rate card.

If your pricing plan hides layer three, you can’t forecast it. You can only hope the vendor’s abstraction holds until the next repricing announcement.
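One way to drag layer three back into view on a credit plan is to back out the dollars per million tokens that the credits imply. This is a minimal sketch, assuming you can observe roughly how many tokens one credit buys. The plan price, credit count, and tokens-per-credit figure are hypothetical and not taken from any vendor.

```python
def implied_price_per_million(plan_dollars: float, credits: int, tokens_per_credit: int) -> float:
    """Dollars per million tokens implied by a credit plan (layer one down to layer three)."""
    total_tokens = credits * tokens_per_credit
    return plan_dollars * 1_000_000 / total_tokens


# Hypothetical plan: $100 buys 1,000 credits, and one credit covers about 20,000 tokens.
implied = implied_price_per_million(100, 1_000, 20_000)
print(f"implied rate: ${implied:.2f} per million tokens")
```

If the vendor changes how many tokens a credit covers, the implied rate moves even though the plan price does not. That is the repricing risk in numeric form.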

Three pricing models, three kinds of forecasting risk

Flat-rate subscription. One seat, one number. Claude Code sits here for individuals — a known monthly charge and you stop thinking about it. Easy to forecast, at the cost of soft rate limits when you exceed the fair-use envelope.

Credit or usage-based. You buy credits, and each request drains them. Augment Code and Cursor both operate in this shape for team tiers. Pros: you only pay for what you use. Cons: the meter is always running, and a policy change can silently shift the exchange rate between credits and real work.

BYOK on top of a platform. You bring your own Anthropic or OpenAI key. You pay Anthropic or OpenAI directly for the tokens. The middleware is free, or self-hosted, or a flat fee. No synthetic unit in between.

All three can be reasonable. The question is which one lets you answer the EM’s question without a sheepish apology by mid-month.

A four-variable cost model you can actually compute

Here is the whole thing, in one line that fits on a whiteboard:

monthly cost ≈ tokens_per_prompt × prompts_per_dev_per_day × devs × working_days × price_per_token

That’s it. Five factors as written, four variables if you treat devs × working_days as a single developer-days term, and one more if you split the token price into input and output. Every AI coding bill on Earth reduces to this expression; the vendors just add abstraction layers on top.
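The one-line model translates directly into code. This is a minimal sketch, assuming prices are quoted in dollars per million tokens. The function names and the example inputs (31,000 tokens per prompt, a blended $2 rate) are illustrative assumptions, not figures from a rate card.

```python
def blended_price(input_tokens, output_tokens, input_price, output_price):
    """Blend input and output prices (dollars per million tokens) by token share."""
    total = input_tokens + output_tokens
    return (input_tokens * input_price + output_tokens * output_price) / total


def monthly_cost(tokens_per_prompt, prompts_per_dev_per_day, devs, working_days, price_per_million):
    """tokens x prompts x devs x days x price, with price quoted per million tokens."""
    total_tokens = tokens_per_prompt * prompts_per_dev_per_day * devs * working_days
    return total_tokens * price_per_million / 1_000_000


# Illustrative inputs only: 31,000 tokens per prompt at a blended $2 per million.
cost = monthly_cost(31_000, 40, 5, 20, 2.0)
print(f"${cost:,.2f} per month")
```

`blended_price` is only needed for the blended form. Keeping input and output as separate terms avoids it entirely and is usually more accurate, because output tokens cost several times more than input tokens.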

Sensible defaults for each variable, for a coding-agent workload in 2026:

tokens_per_prompt. This is the variable most forecasts get wrong. A real coding-agent task in 2026 reads 30,000 to 75,000 input tokens — the agent pulls relevant files, prior messages, test output, and tool results into context before it writes a single line back. For a lightweight Haiku 4.5 task: about 30,000 input + 1,000 output. For a heavier Sonnet 4.6 task with deeper codebase context and multi-file edits: 60,000 input + 3,000 output. If your working estimate is 5,000 tokens per prompt, you’re modelling chat, not coding.

prompts_per_dev_per_day. Instrument it for your team. Most engineering orgs land somewhere between 20 and 60 meaningful prompts per active developer per working day. Skim-scrolls don’t count.

devs and working_days. Devs is easy. Working days: 20 is the honest monthly number, because you will not code on Saturdays and you will not code when you are sick, and neither will your team.

price_per_token. Take it straight from the source: Anthropic and OpenAI each publish per-million-token rates on their official pricing pages. No middleware, no translation.
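Of the defaults above, prompts_per_dev_per_day is the one that has to be measured rather than looked up. This is a rough sketch of that measurement, assuming you can export one record per meaningful prompt. The log format (a developer name and a date) and the sample entries are hypothetical.

```python
from collections import Counter
from datetime import date


def prompts_per_active_dev_day(events):
    """Average prompts per (developer, day) pair that saw at least one prompt."""
    per_dev_day = Counter(events)  # (developer, day) -> prompt count
    if not per_dev_day:
        return 0.0
    return sum(per_dev_day.values()) / len(per_dev_day)


# Hypothetical log: one (developer, day) tuple per meaningful prompt.
log = [
    ("ana", date(2026, 1, 5)), ("ana", date(2026, 1, 5)), ("ana", date(2026, 1, 5)),
    ("ana", date(2026, 1, 6)),
    ("ben", date(2026, 1, 5)), ("ben", date(2026, 1, 5)),
]
average = prompts_per_active_dev_day(log)
print(average)
```

Averaging over active developer-days, rather than all calendar days, matches the "per active developer per working day" framing and keeps holidays and sick days from dragging the number down.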

A five-developer team’s honest monthly bill

Let’s plug in real numbers. Five developers, 20 working days, 40 meaningful prompts each per day. That’s 4,000 prompts a month.

Split 70/30 between a fast tier and a smart tier — most teams find this shape naturally, because you don’t want Opus for a variable rename. So: 2,800 Haiku-tier prompts, 1,200 Sonnet-tier prompts.

Haiku 4.5 side at $1 input / $5 output per million tokens:

• 2,800 prompts × 30,000 input tokens = 84M input × $1 = $84

• 2,800 prompts × 1,000 output tokens = 2.8M output × $5 = $14

Haiku subtotal: $98.

Sonnet 4.6 side at $3 input / $15 output per million tokens:

• 1,200 prompts × 60,000 input tokens = 72M input × $3 = $216

• 1,200 prompts × 3,000 output tokens = 3.6M output × $15 = $54

Sonnet subtotal: $270.

Team total, uncached: $368 per month. About $74 per developer.
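The same arithmetic can be reproduced in a few lines, which makes it easy to swap in your own numbers. This is a sketch using the prompt counts, token sizes, and per-million prices quoted above. The `Tier` class is an illustrative helper, not part of any SDK.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    prompts: int
    input_tokens: int   # per prompt
    output_tokens: int  # per prompt
    input_price: int    # dollars per million input tokens
    output_price: int   # dollars per million output tokens

    def cost(self) -> float:
        # Keep the numerator in integers, then divide once by a million.
        input_part = self.prompts * self.input_tokens * self.input_price
        output_part = self.prompts * self.output_tokens * self.output_price
        return (input_part + output_part) / 1_000_000


total_prompts = 5 * 20 * 40  # devs x working days x prompts per dev per day
haiku = Tier("Haiku 4.5", total_prompts * 70 // 100, 30_000, 1_000, 1, 5)
sonnet = Tier("Sonnet 4.6", total_prompts * 30 // 100, 60_000, 3_000, 3, 15)

total = haiku.cost() + sonnet.cost()
for tier in (haiku, sonnet):
    print(f"{tier.name}: ${tier.cost():.0f}")
print(f"Team total: ${total:.0f}, about ${total / 5:.0f} per developer")
```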

Now turn on Anthropic’s prompt caching — which charges cache reads at 10% of input price. This is where the 30K-75K read size actually works in your favor: most of those tokens are codebase files, prior turns, and system prompt — the exact stuff that gets re-read every prompt. With a warm cache, 70 to 90 percent of your input tokens are cache reads at one-tenth the price.

Real team with caching on: $130 to $180 per month. About $26 to $36 per developer.
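The caching estimate follows from the uncached bill: $300 of the $368 is input and $68 is output, and only the input side gets cheaper. This is a simplified sketch, assuming a fixed share of input tokens are cache reads at 10% of the input price. The function name and the two hit rates are illustrative.

```python
CACHE_READ_MULTIPLIER = 0.10  # cache reads billed at 10% of the input price


def cached_total(input_dollars, output_dollars, cache_hit_rate):
    """Monthly total when cache_hit_rate of input tokens are served as cache reads."""
    uncached_share = 1.0 - cache_hit_rate
    cached_share = cache_hit_rate * CACHE_READ_MULTIPLIER
    return input_dollars * (uncached_share + cached_share) + output_dollars


# Uncached figures from the worked bill: $84 + $216 input, $14 + $54 output.
input_dollars, output_dollars = 300.0, 68.0
for hit_rate in (0.70, 0.90):
    total = cached_total(input_dollars, output_dollars, hit_rate)
    print(f"{hit_rate:.0%} cache reads: ${total:.0f}")
```

This sketch does not model cache writes, which Anthropic bills above the base input rate, so its results sit at or slightly below the $130 to $180 range.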

Your team may be heavier than this. Double every input count and you’re still under $750 a month uncached, or roughly $300 with caching — for five developers doing real work all month. Sanity-check your vendor’s invoice against those numbers.
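The heavier-team case and the invoice check can both be expressed as small functions. This is a minimal sketch, assuming the $300 input and $68 output split from the uncached bill. The invoice amount is a made-up figure used only to show the comparison.

```python
def uncached_total(input_dollars, output_dollars, input_scale=1.0):
    """Uncached monthly total with every input token count multiplied by input_scale."""
    return input_dollars * input_scale + output_dollars


def invoice_multiple(invoice_dollars, modelled_dollars):
    """How many times larger the vendor invoice is than the modelled token cost."""
    return invoice_dollars / modelled_dollars


# Base figures from the worked bill: $300 of input and $68 of output per month.
heavy = uncached_total(300.0, 68.0, input_scale=2.0)
print(f"doubled input, uncached: ${heavy:.0f}")

# Hypothetical invoice, used purely for illustration.
print(f"invoice is {invoice_multiple(3_000.0, heavy):.1f}x the modelled cost")
```

A multiple well above 1 does not prove overcharging. It does tell you how much of the bill comes from something other than tokens at provider rates.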

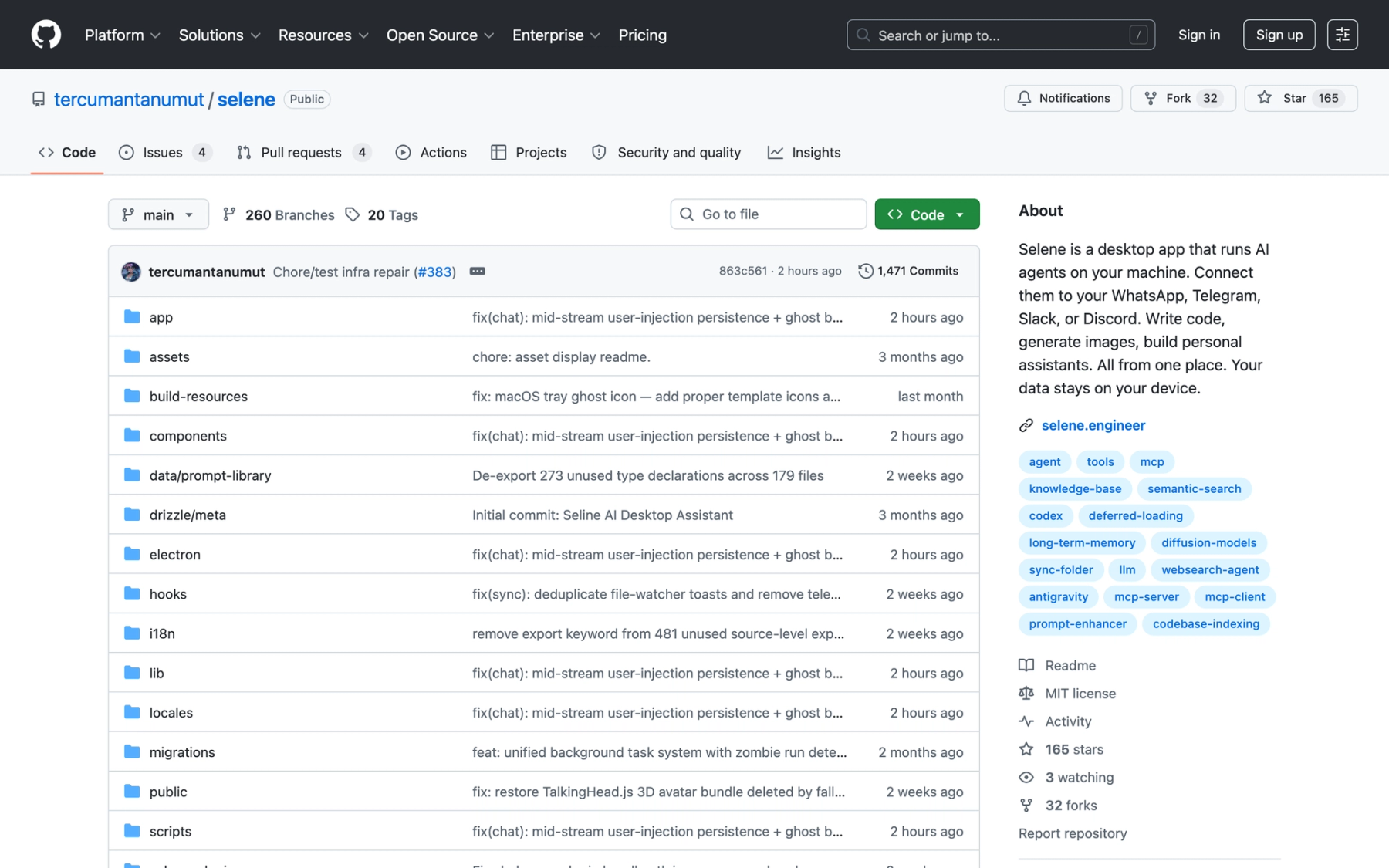

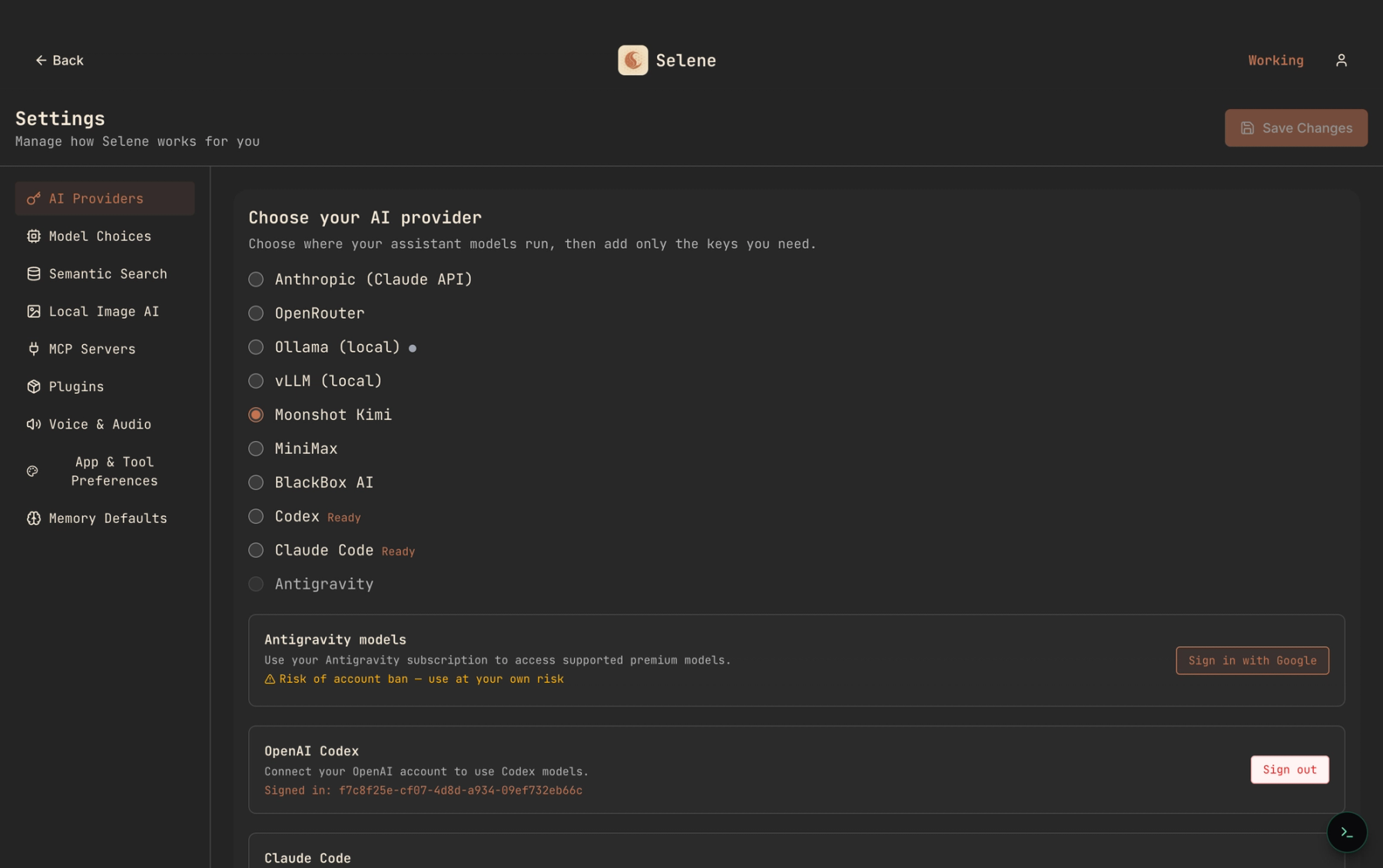

Where Selene and BYOK fit in all this

Selene is open-source, self-hosted, and BYOK. The math above is the math you actually pay — plus whatever compute you run Selene on, which for most teams is a laptop or a small container.

No synthetic credit. No repricing announcement. No "premium request" counter that drains faster when the next model ships. The middleware layer is free because the middleware is yours.

That doesn’t make Selene the right tool for everyone. Individual developers who love one flat charge and never want to think about tokens are genuinely well-served by a seat-based subscription. Teams who actively want someone else to manage a retrieval stack for them have reasonable options too.

But if you’re the EM trying to answer what-will-we-spend-next-month — BYOK on top of your own platform is the one pricing model where you can read your answer straight from a provider rate card. No middleman, no footnote.

A predictable bill is a design choice

You cannot forecast what you cannot see. The first step toward predictable AI coding pricing is dragging layer three — real tokens at real provider rates — up to the surface where you can actually plan against it.

Do the math on a whiteboard once. Pin the number to your channel. Let your team ship without checking the meter.