Apr 21, 2026 • 7 min read

How to predict your AI coding costs in 2026: a practical model for teams

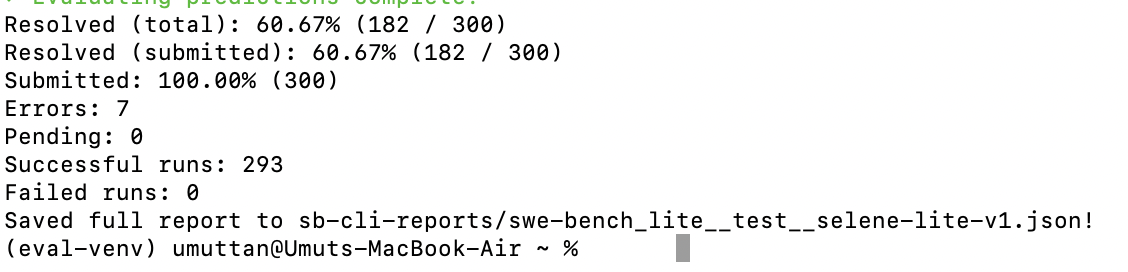

Most AI coding bills look unpredictable because the pricing is built from synthetic credits layered on top of real tokens. Here is a four-variable cost model, verified Anthropic and OpenAI rate cards, and an honest monthly bill for a five-developer team: $368 uncached, or roughly $150 with prompt caching, not $3,000.