Owning your AI agent platform: why we built Selene open, BYOK, and self-hosted

A short, honest note on how AI coding tool pricing evolved over the last eighteen months — and the five architectural decisions (open source, BYOK, model-agnostic per-task models, open MCP tool protocol, real multi-agent delegation) that we made to give Selene users a platform they can depend on for years.

The AI coding tool market matured fast over the last eighteen months. A year and a half ago, most of us were choosing between a handful of editor plugins with simple per-seat pricing. Today, the same category has split into credits, tokens, context-charged background work, and tiered subscription plans that can look very different from one invoice to the next. That evolution is natural — running frontier models is expensive, and providers are still learning how to price agentic workloads. It also creates a quieter question that every team using these tools will eventually have to answer: how much of your workflow do you want to depend on pricing decisions you do not control?

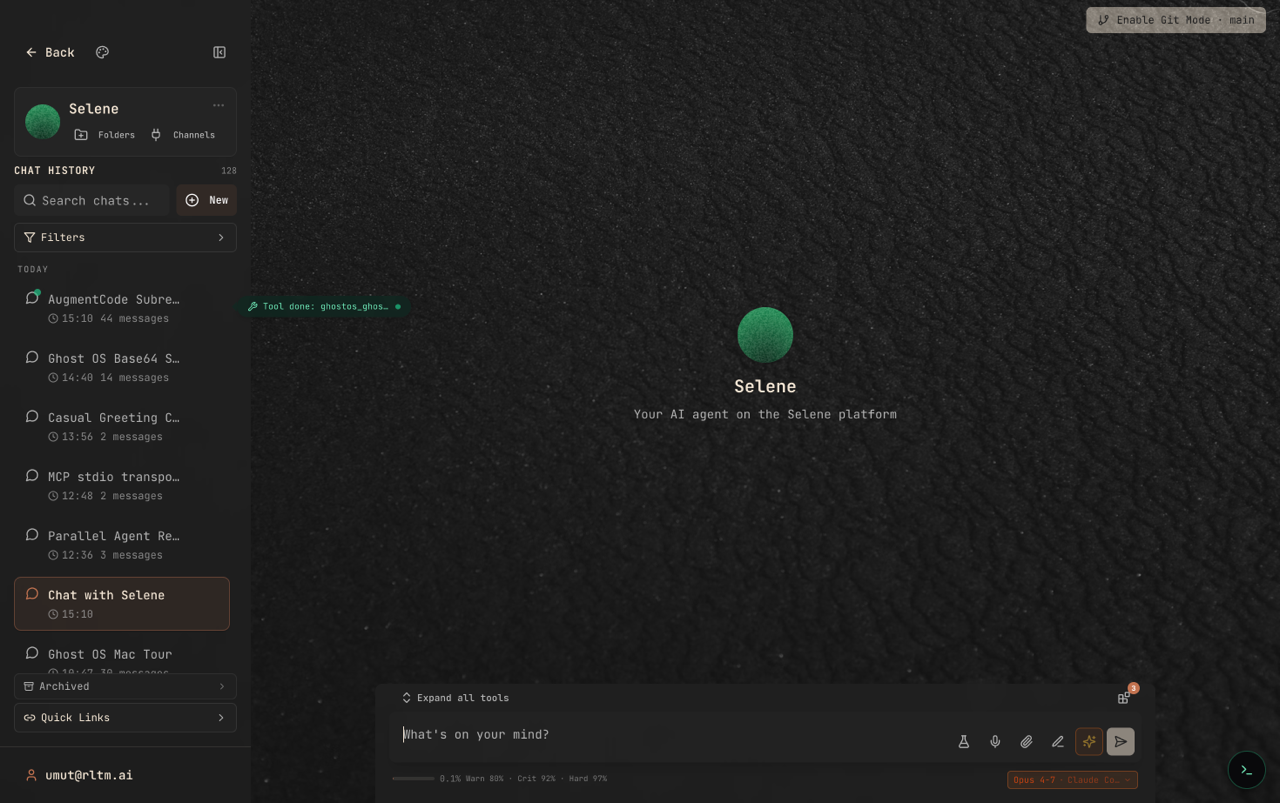

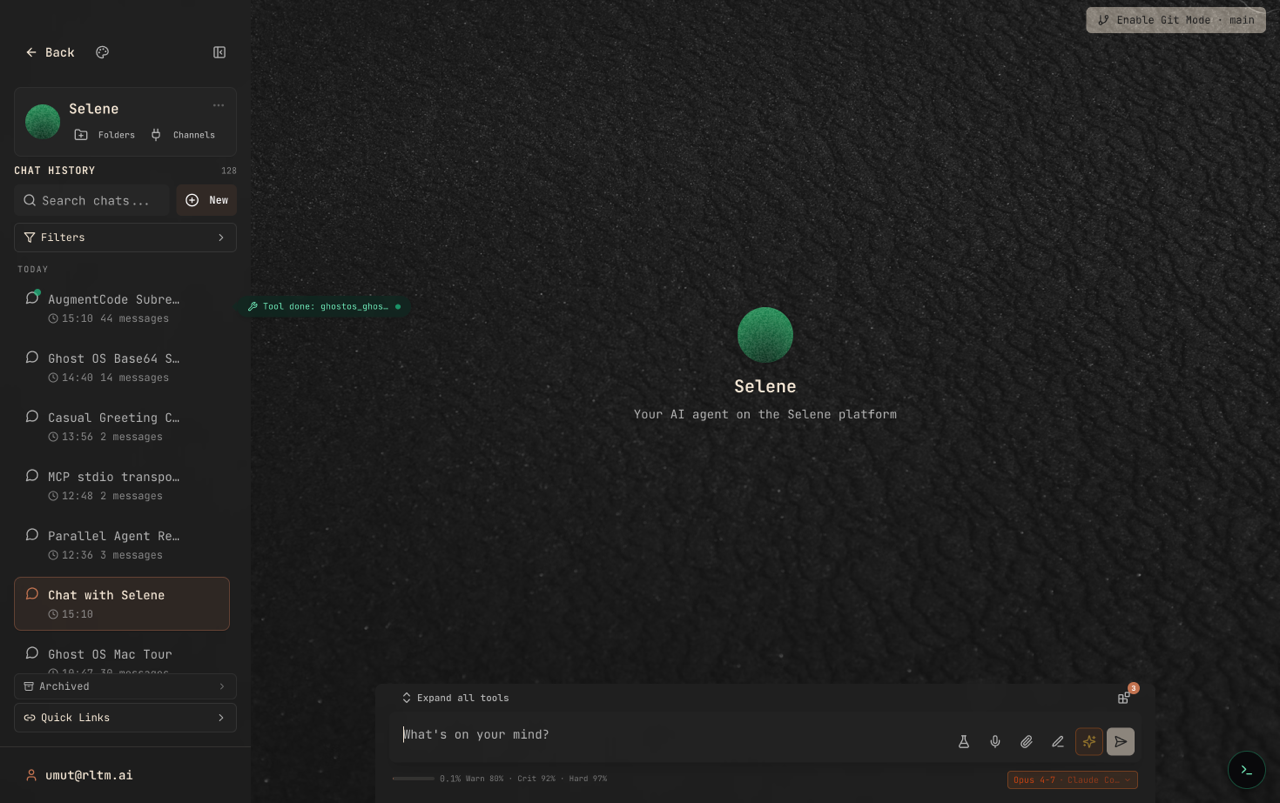

This post is a short, honest note about that question, and about the architectural choices we made for Selene in response to it. Every screenshot below is from the app itself, captured during the session that produced this article.

What we kept hearing from users

While we were researching a content series, we spent time reading developer communities — r/AugmentCodeAI, r/cursor, the Cody forum, and a few others. These are engaged, thoughtful communities built around tools whose teams are clearly trying to serve them well. The conversations we found were not a pile-on; they were people thinking out loud about the same handful of concerns:

Pricing models that change faster than the product does, making it hard to budget quarter-over-quarter.

Features that come and go between releases, with no way to pin a version you rely on.

No graceful story when an upstream model provider has an outage — the whole agent stops working.

A sense that the tool sits between the user and the model in ways that are not easy to see.

None of these are unique to any one product. They are a family of issues that shows up whenever a closed, subscription-based tool becomes load-bearing in someone's day-to-day workflow. The more valuable the tool, the higher the cost of that dependency. These are good, hard problems, and the vendors in this space are working on them.

The choice we made with Selene

We wanted to build the kind of agent platform we would be comfortable depending on for years. That led to five decisions that shape almost everything about how Selene works, and they are deliberately different from the default shape of a modern AI coding tool.

Open source, end to end

Selene is AGPL-licensed and published in full on GitHub. The agent loop, the memory system, the tool router, the skills runtime, the plugin lifecycle — all of it is readable code. If you want to understand exactly how a decision was made, you can trace it. If you want to pin a version from six months ago because a newer release changed a feature you relied on, you can. The product cannot be quietly retired, and a future rewrite of its pricing cannot reach backwards into your past work.

Bring your own key

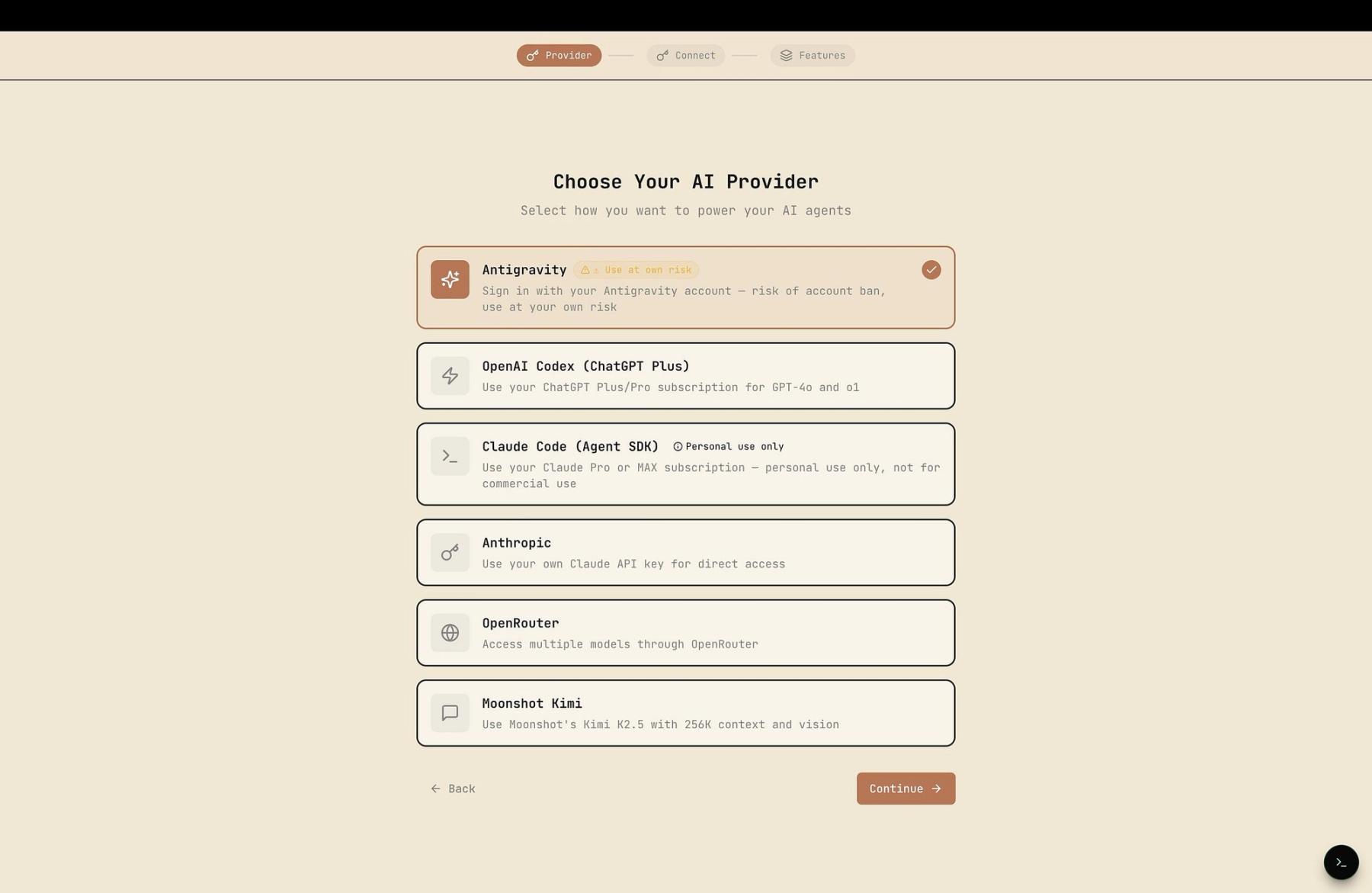

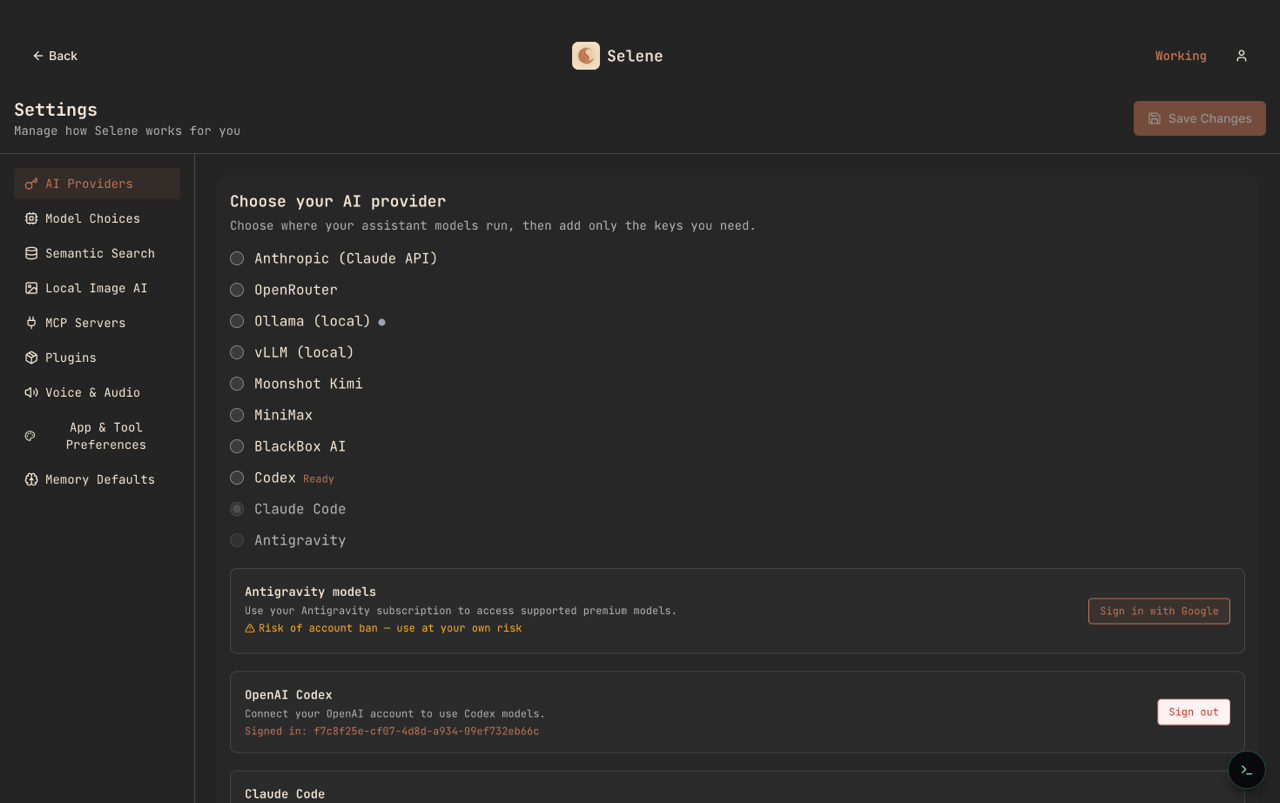

There are no Selene credits. You connect Selene to your own Anthropic, OpenAI, Google, or local-model endpoint, and the bill arrives from that provider, in that provider's units, at that provider's price. We never sit in the middle of the billing meter. The provider list below includes hosted APIs alongside local runtimes like Ollama and vLLM: if an endpoint speaks a protocol Selene supports, Selene can talk to it.

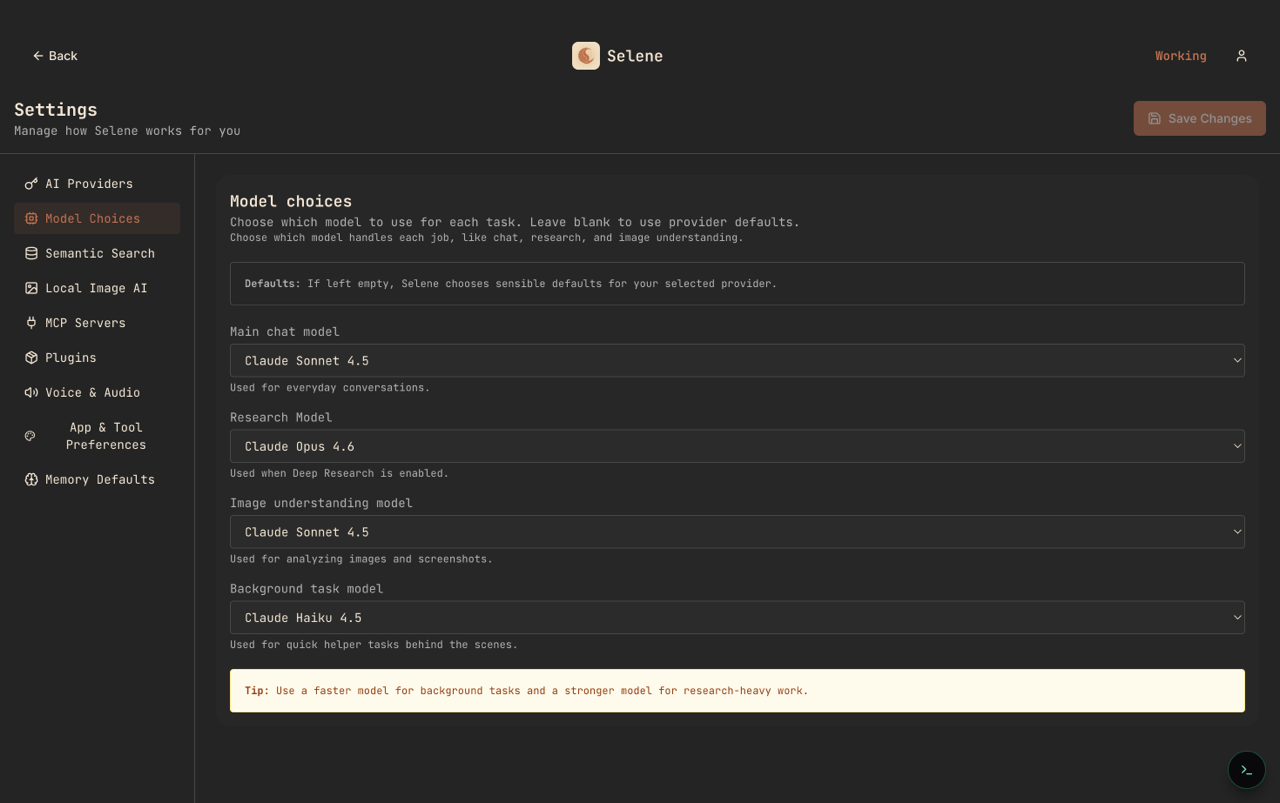
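To make the billing path concrete, here is a minimal Python sketch of what a bring-your-own-key provider table could look like. The `Provider` class, the `PROVIDERS` table, the environment-variable names, and the `resolve_key` helper are illustrative assumptions, not Selene's actual configuration format. The local base URLs are the usual defaults for Ollama and vLLM.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Provider:
    name: str
    base_url: str
    key_env: Optional[str]  # None for local runtimes that need no API key


# Hypothetical provider table: hosted APIs next to local runtimes.
PROVIDERS = {
    "anthropic": Provider("anthropic", "https://api.anthropic.com", "ANTHROPIC_API_KEY"),
    "openai": Provider("openai", "https://api.openai.com/v1", "OPENAI_API_KEY"),
    "ollama": Provider("ollama", "http://localhost:11434", None),
    "vllm": Provider("vllm", "http://localhost:8000/v1", None),
}


def resolve_key(provider: Provider, env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return the user's own key for a provider, or None for keyless local runtimes."""
    if provider.key_env is None:
        return None
    key = env.get(provider.key_env)
    if not key:
        raise RuntimeError(f"Set {provider.key_env} to use {provider.name}")
    return key
```

The point of the shape is that the key never leaves the user's environment: the platform only looks it up and passes it to the provider the user chose.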

Model-agnostic, per-task

No single model is best at every job. Selene lets you assign different models to different roles — chat, deep research, image understanding, and the small background tasks the agent runs on your behalf. That matters in two directions. On good days, you use the right model for the right job: a fast, cheap model for retrieval, a larger one for reasoning, a local one for anything sensitive. On hard days, when one provider is degraded or down, the rest of the workflow keeps moving.
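The routing idea above can be sketched in a few lines of Python. This is an assumption-laden illustration, not Selene's router: the role names follow the paragraph, but `ROLE_MODELS`, `pick_model`, and the `provider/model` identifiers are hypothetical placeholders.

```python
from typing import Dict, List, Set

# Hypothetical role-to-model preferences, most preferred first.
# The identifiers are placeholders in "provider/model" form, not real model names.
ROLE_MODELS: Dict[str, List[str]] = {
    "chat": ["anthropic/large-model", "openai/large-model", "ollama/local-model"],
    "research": ["openai/large-model", "anthropic/large-model"],
    "vision": ["google/vision-model", "openai/large-model"],
    "background": ["openai/small-model", "ollama/local-model"],
}


def provider_of(model: str) -> str:
    """Extract the provider prefix from a 'provider/model' identifier."""
    return model.split("/", 1)[0]


def pick_model(role: str, degraded: Set[str]) -> str:
    """Return the first preferred model for a role whose provider is not degraded."""
    for model in ROLE_MODELS[role]:
        if provider_of(model) not in degraded:
            return model
    raise LookupError(f"No healthy provider available for role {role!r}")
```

On a good day `pick_model("chat", set())` returns the first preference; if Anthropic is marked degraded, the same call falls through to the next entry, which is the "rest of the workflow keeps moving" behavior in miniature.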

Open tool protocol, not a walled marketplace

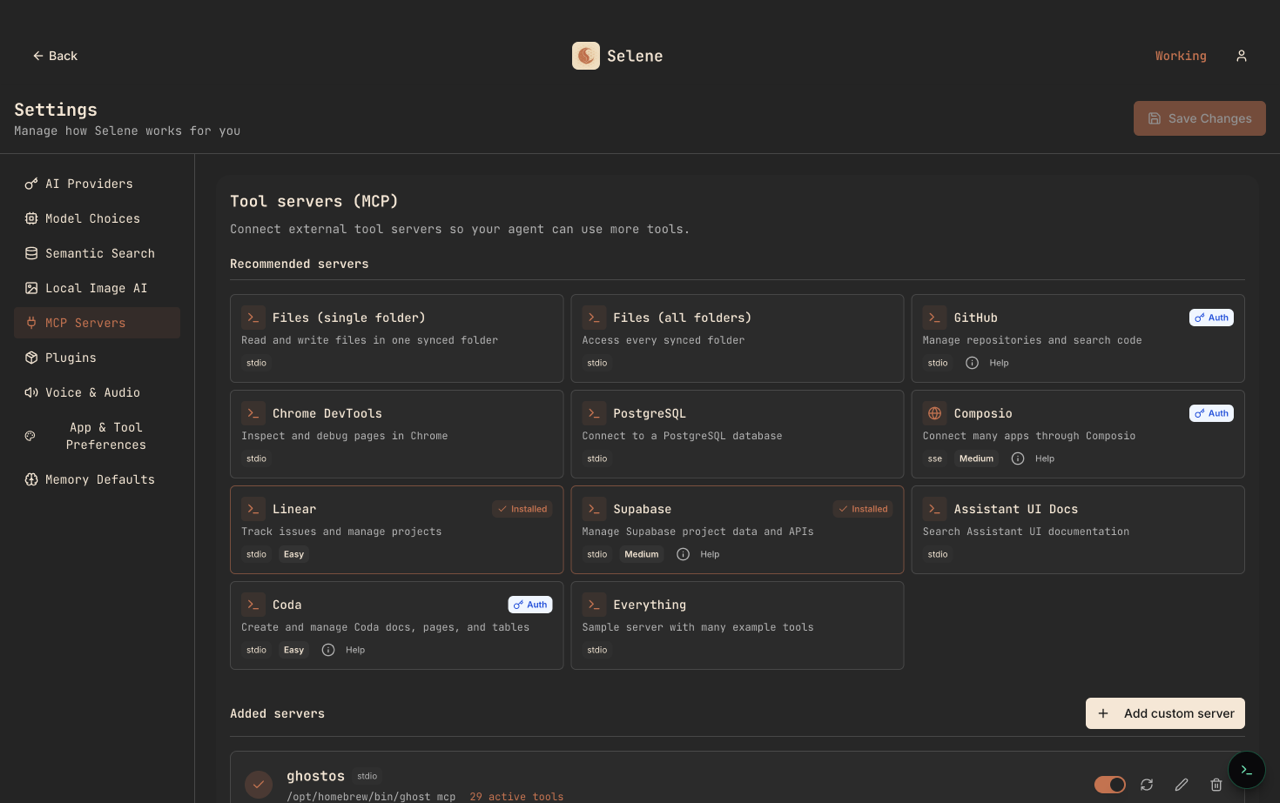

Selene speaks the Model Context Protocol. That means every tool server you already use elsewhere — filesystem, databases, GitHub, Linear, Supabase, your own custom stdio servers — works here without rewriting. If a vendor ships a better MCP server tomorrow, you swap it in. If you build your own, it runs locally and never has to be published to someone else's store.
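For readers who have not looked under the hood of MCP: it is built on JSON-RPC 2.0, and the stdio transport exchanges one JSON message per line. The Python sketch below only builds the request lines a client would write to a server's stdin; it does not show Selene's internals, and the `rpc_request` helper and the `read_file` tool name are hypothetical. A real session also starts with an `initialize` handshake, omitted here for brevity.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_ids = count(1)  # simple monotonically increasing request ids


def rpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> str:
    """Serialize one JSON-RPC 2.0 request as a single newline-terminated line."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message) + "\n"


# Ask the server which tools it exposes.
list_tools = rpc_request("tools/list")

# Invoke one tool by name; "read_file" and its arguments are illustrative only.
call_tool = rpc_request(
    "tools/call",
    {"name": "read_file", "arguments": {"path": "README.md"}},
)
```

Because the wire format is this plain, a custom stdio server can stay entirely local: anything that reads these lines and writes matching responses can be swapped in.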

Real multi-agent orchestration

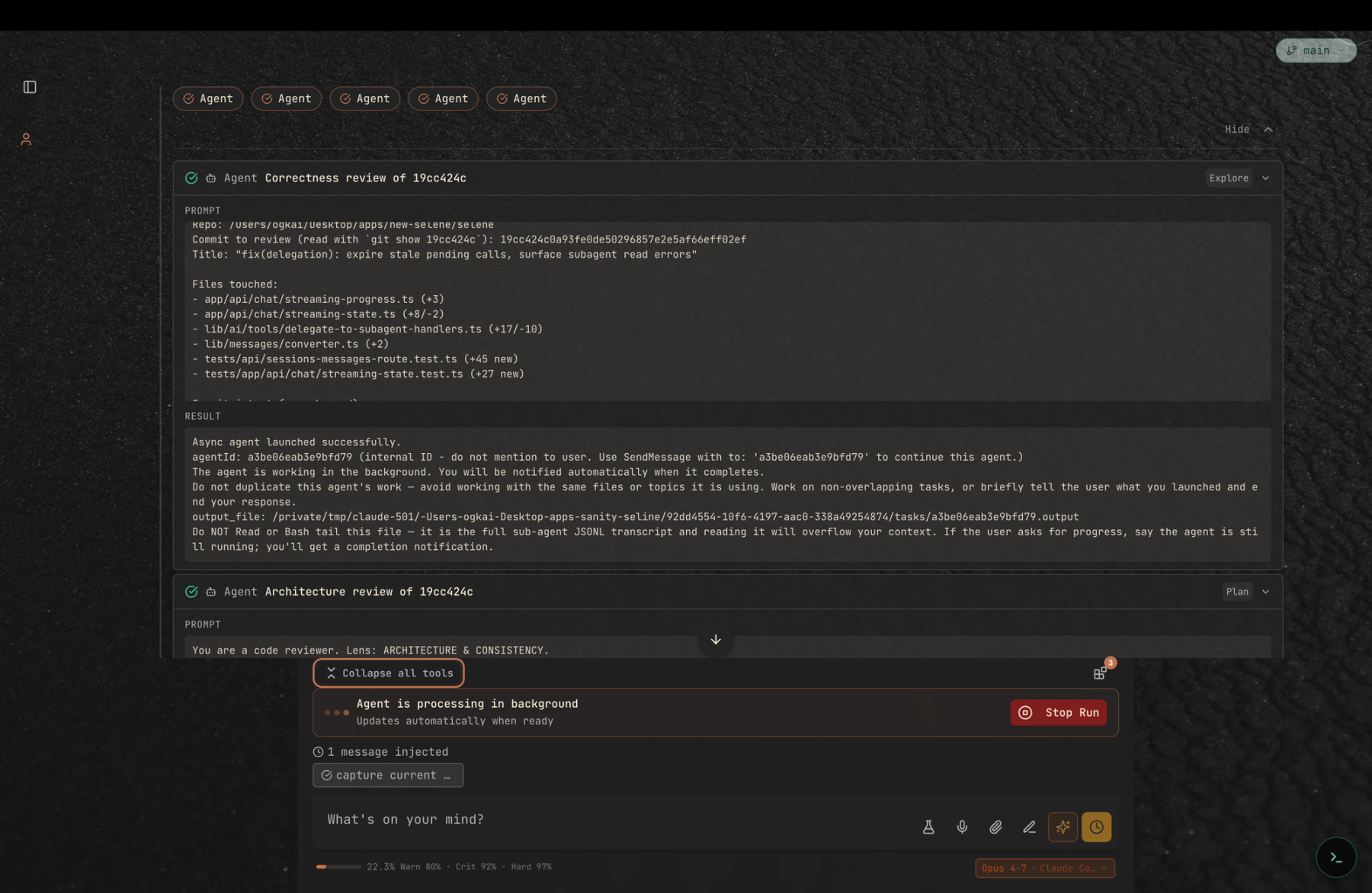

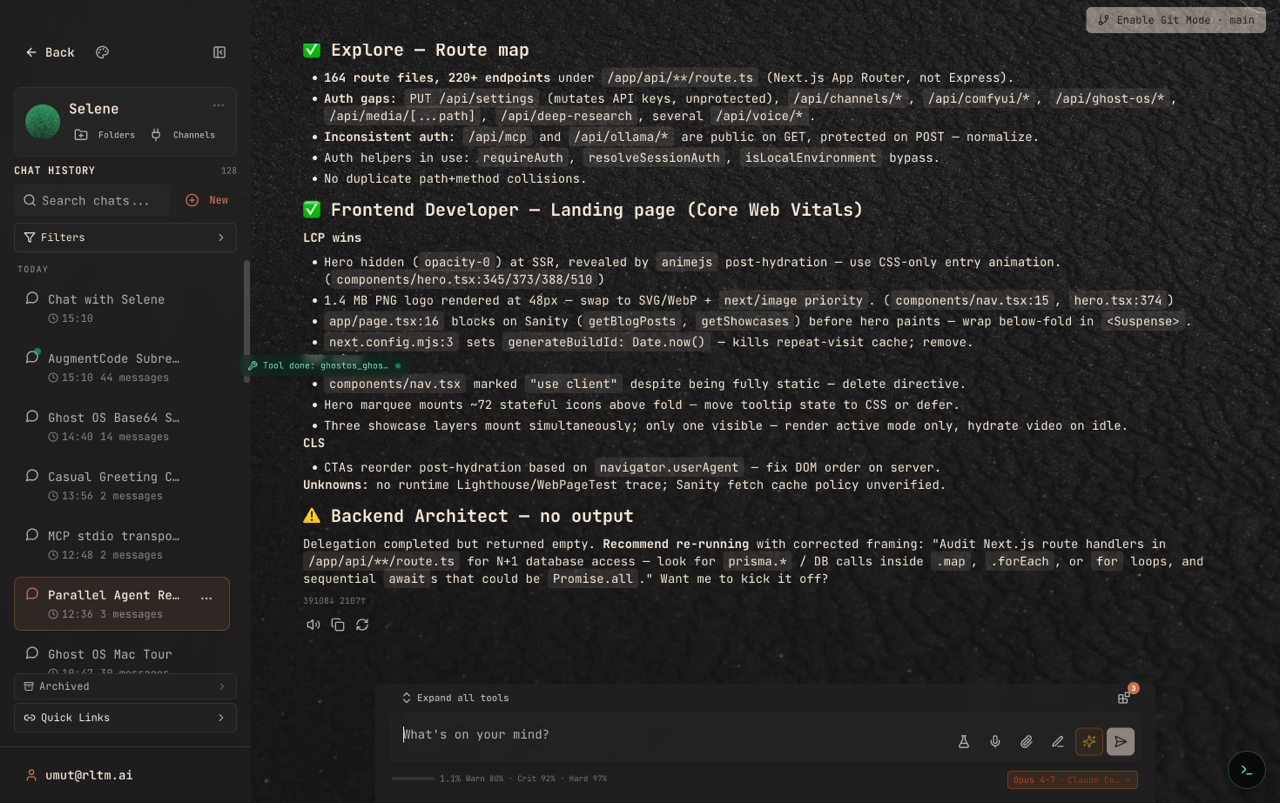

Most AI tools call themselves "agents" but run a single linear loop. Selene runs a workflow system: a top-level agent can delegate a task to one or more specialist subagents in parallel, each on its own model, each with its own session state. Their results stream back as structured messages you can inspect, re-run, or compose. The accompanying screenshot is from a real session in which Explore, Frontend Developer, and Backend Architect subagents were working on different parts of the same audit simultaneously.
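The delegation pattern can be sketched with Python's `asyncio`. This is a toy model of the idea, not Selene's workflow engine: `SubagentResult`, `run_subagent`, `delegate`, and the model labels are hypothetical, and the model call is replaced by a placeholder.

```python
import asyncio
from dataclasses import dataclass
from typing import List


@dataclass
class SubagentResult:
    """A structured message a subagent hands back to the top-level agent."""
    agent: str
    model: str
    summary: str


async def run_subagent(agent: str, model: str, task: str) -> SubagentResult:
    # Placeholder for a real model call; a real subagent would also
    # keep its own session state here.
    await asyncio.sleep(0)
    return SubagentResult(agent, model, f"{agent} finished: {task}")


async def delegate(task: str) -> List[SubagentResult]:
    """Fan one task out to specialist subagents, each on its own model."""
    assignments = [
        ("Explore", "fast-model"),
        ("Frontend Developer", "large-model"),
        ("Backend Architect", "local-model"),
    ]
    # gather runs the coroutines concurrently and returns results in input order.
    return await asyncio.gather(
        *(run_subagent(agent, model, task) for agent, model in assignments)
    )


results = asyncio.run(delegate("audit the repository"))
```

Returning dataclass instances rather than raw strings is what makes the results inspectable and re-runnable: each one records which agent and which model produced it.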

What this is not

This is not an argument that closed, credit-priced AI tools are badly built. Several of them are excellent, and their teams have pushed the whole category forward. It is also not an argument that open source is always the right answer for everyone — plenty of developers would rather pay a flat fee and not think about infrastructure, and that is a reasonable tradeoff.

What we are saying is narrower: if the agent platform is becoming a core piece of how you and your team work, it is worth asking what happens when any detail of it changes. Open source, BYOK, model-agnostic orchestration, an open tool protocol, and real multi-agent delegation are our answers to that question. They are not the only valid answers. They are the ones we wanted to be able to offer.

Try Selene

Selene is free to self-host from github.com/tercumantanumut/selene. You can run it locally, connect your existing provider keys, and bring over agents, skills, and memory from most other tools in a single session. If you want a guided path — including a migration checklist for people coming from other AI coding platforms — we are publishing a companion series over the next month that walks through it one pain point at a time.

Thanks to every developer whose thinking-out-loud in public shaped how we reason about this. The conversations in communities like r/AugmentCodeAI, r/cursor, and the Claude subreddits are why the category keeps getting better — for the closed tools and the open ones alike.